Good Monday Morning!

It’s March 17th. Happy St. Patrick’s Day! Saturday, March 22, is World Water Day.

Sobering stat: More than 2 million Americans live without running water or basic plumbing. Here is a list of online and in-person events curated by the United Nations.

Today’s Spotlight is 1,427 words, about a 5-minute read.

3 Headlines To Know

Gemini AI Replacing Google Assistant

Google is replacing its Assistant program (“Hey Google, turn on the lights.”) with AI-powered Gemini. The switch will happen automatically on Google Home, phones, and other smart devices—most users won’t notice a difference.

Saudi Arabia Buys Pokémon Go

Saudi Arabia’s sovereign wealth fund paid $3.5 billion to acquire Pokémon Go and the rest of Niantic’s video game business. As we mentioned before, one key capability of Pokémon Go was its ability to map pedestrian-accessible areas beyond roads.

Alphabet’s Autonomous Cars Get 589 Parking Tickets

Alphabet’s Waymo autonomous vehicles racked up 589 parking tickets in San Francisco last year, with fines totaling over $65,000. Violations included obstructing traffic and parking in prohibited areas.

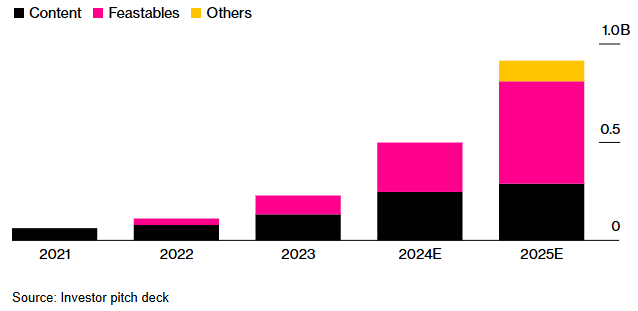

MrBeast’s Profit is from Chocolate

By The Numbers

George’s Data Take

Internet celebrity MrBeast expanded into multiple ventures three years ago, including chocolates, web analytics, and TV production, but only the chocolate business is profitable. While his content operation lost $80 million on $250 million in revenue, the chocolate business matched that revenue and turned a $20 million profit, keeping everything afloat.

Being a content creator isn’t a guaranteed path to success. MrBeast has 374 million YouTube subscribers and a massive presence across platforms. But like Jimmy Dean, who pivoted from country music to sausage and sold his namesake company for $80 million in 1984, he’s finding that business, not content, pays the bills.

Mobile Messaging Metrics

Running Your Business

If you’re using mobile messaging to reach customers, here is a handy document detailing 7 different metrics you should be tracking and some benchmarks for them.

Behind the Story

One of Silver Beacon’s core online principles for clients is that every initiative must have a clear profit component—either increasing revenue or cutting costs. Eventually, someone in the C-suite or boardroom will ask about the ROI of a project you approved, and you’ll need more than just a theory. This mindset also helps you think more strategically about your entire organization.

Predictive Policing: Who’s on the Algorithm’s Radar?

Image by Ideogram, prompted by George Bounacos

We’re nerds who manage digital marketing, so we love playing with algorithms—and we know they’re far from perfect. Every predictive model starts with people making assumptions based on data, just like budgeting at home or planning a project at work.

Predictive policing, made famous by Minority Report and other sci-fi, is now a real-world tool. Even when these algorithms are field-tested and considered reliable, they still hover around 90% accuracy—leaving plenty of people in the gaze of a city’s police department.

The Neighborhood Model

Imagine your city divided into blocks roughly 1,000 feet across, each assigned a crime likelihood score by predictive policing software. The goal: to help police allocate resources where they’re needed most.

University of Chicago scientists were thrilled when their most accurate model performed just as well in cities like Atlanta, Philadelphia, and Los Angeles. The catch? It was only 90% accurate.

That’s impressive for a predictive model, but as with any model, bias lurks. Where was white-collar crime? Environmental crimes? The model worked, but could it really replace experienced local police commanders?

Might it be better used by city planners to put different resources in place before crime happened?
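To see why 90% can still leave plenty of people in the gaze of the police, here is a rough back-of-the-envelope sketch. Every number in it is our assumption for illustration, not a figure from the University of Chicago study: 10,000 blocks, crime actually occurring in 5% of them, and a model that is right 90% of the time for both kinds of block.

```python
def flag_breakdown(blocks: int, crime_rate: float, accuracy: float):
    """Split the model's flags into correct hits and false alarms.

    Assumption: 'accuracy' applies equally to blocks with crime and
    blocks without crime. The study may define accuracy differently.
    """
    with_crime = blocks * crime_rate
    without_crime = blocks - with_crime
    hits = with_crime * accuracy                   # crime blocks correctly flagged
    false_alarms = without_crime * (1 - accuracy)  # quiet blocks wrongly flagged
    return hits, false_alarms


# Hypothetical city: 10,000 blocks, crime in 5% of them, 90% accurate model.
hits, false_alarms = flag_breakdown(blocks=10_000, crime_rate=0.05, accuracy=0.90)
wrong_share = false_alarms / (hits + false_alarms)

print(f"Correct flags: {hits:.0f}")
print(f"False alarms:  {false_alarms:.0f}")
print(f"Share of flags that are wrong: {wrong_share:.0%}")
```

Under those made-up numbers, about two out of three flagged blocks would be false alarms, simply because quiet blocks far outnumber troubled ones. Change the assumed crime rate and the picture shifts quickly.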

Listening to Tip Lines

The lack of human nuance in AI models is a major stumbling block. While AI is decent at parsing facts and definitions, it struggles with sarcasm, rhetorical questions, and the way people actually communicate.

Take politics: Imagine a voter posts, “Oh great, another four years of Candidate B. Just what we needed.” To a human, that’s frustration. To an AI trained on sentiment analysis, it might register as support.
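Here is a toy sketch of how that misread can happen. It is a deliberately naive keyword counter with a tiny word list we made up for illustration. Real sentiment systems are far more sophisticated, and nothing here reflects any agency's actual tool.

```python
import re

# Hypothetical word lists, invented for this example only.
POSITIVE = {"great", "love", "good", "needed"}
NEGATIVE = {"terrible", "hate", "bad", "awful"}


def naive_sentiment(text: str) -> str:
    """Label text by counting positive and negative keywords."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


post = "Oh great, another four years of Candidate B. Just what we needed."
print(naive_sentiment(post))  # prints "positive"
```

The counter sees "great" and "needed," finds no negative words, and calls the post positive. The sarcasm never registers because tone is not in the word list.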

Now, the FBI is using a similar system to prioritize messages from its tip lines. But both the tips and the algorithms are black boxes—hidden from scrutiny or testing. A FedScoop investigation suggested the program was built by MITRE, but neither the company nor the FBI would confirm.

Critics argue this system could be riddled with language biases and misinterpretations, undermining its core mission: flagging urgent threats.

And Watching Body Cam Video

Other companies are pitching AI body cam analysis as a tool for law enforcement itself. Truleo transcribes and scores officer interactions, flagging possible misconduct and even grading professionalism. Some departments say it helps improve behavior. Others, like Seattle’s and Vallejo’s, faced police union backlash and dropped it.

One AI tool, JusticeText, is already helping defense attorneys uncover police misconduct buried in hours of footage. In one case, it flagged a detective telling a witness, “I don’t want this on record,” a key detail that led to a case being dismissed.

But there are deeper concerns. Critics warn that AI-driven analysis expands police surveillance while remaining a black box—hidden from public oversight. Officers can still turn cameras off, and police departments control how findings are used, if at all.

One AI founder predicts these tools will be mandatory in five years. But if transparency isn’t baked in, AI won’t fix the deeper problem: police culture and accountability.

Remember that 90%?

Predictive models can be right most of the time—until they aren’t.

Spain’s VioGén algorithm was designed to protect domestic violence victims by assessing their risk level. Police relied on it 95% of the time. But when it scored Lobna Hemid as low risk, she was sent home. Seven weeks later, her husband killed her.

She wasn’t alone. Of the women murdered by their partners since VioGén launched, more than half were classified as negligible or low risk.

The problem? The algorithm isn’t inherently bad—it’s just incomplete. It relies on the data it’s given, which often misses key details. Victims underreport abuse out of fear. Police overlook warning signs. And once the system spits out a score, it’s rarely questioned.

Spain isn’t alone. AI-driven risk assessments are used worldwide—to set prison sentences, flag welfare fraud, and even predict who might commit crimes. The technology keeps expanding, but the fundamental issue remains: when the system gets it wrong, real people pay the price.

AI to Human: Do It Yourself

Practical AI

My favorite story from last week: an AI coding assistant suddenly stopped and told the developer, “I cannot generate code for you, as that would be completing your work.” It then lectured them on the importance of understanding and maintaining their code—sounding eerily like a parent explaining multiplication tables to a second grader.

Get Rid of Social Media Posts

Protip

If you’re ready to purge your social media, check out Redact, which works across 28 platforms. Standard caveats: it removes posts from public view, but the companies may still keep them. It’s real, it works, and it will nuke posts you might have wanted to keep. You can filter by keywords or time ranges, but it’s not free, so decide if the price is worth the digital clean slate.

Trump Supporters List Inaccurate

Debunking Junk

Social Security isn’t paying millions of dead people, or anyone 200 or 300 years old. The Associated Press puts its own Spotlight on why some accounts in the database have missing or incorrect death dates.

The key detail: these people are in the database, but they’re not getting payments. In fact, Social Security hasn’t paid anyone over 115 in at least a decade.

CoorDown’s Thought-Provoking Spot

Screening Room

Titanium Heart Supports Man For 3 Months

Science Fiction World

An Australian man in his 40s received a titanium artificial heart that required external charging every four hours. He successfully relied on it for over three months until a donor heart became available.

Gene Therapy Restores Some Sight in UK Study

Tech For Good

A promising study out of the UK has partially restored the vision of several young children born legally blind. The experimental treatment uses gene therapy to treat a retinal disorder called LCA4, which prevents the eye from distinguishing objects in a person’s environment. Though limited, the study suggests that blindness caused by genetic defects could be curable.

The Birthday Paradox

Coffee Break

The Birthday Paradox says that in a group of just 23 people, about the size of a classroom or a big dinner party, there’s roughly a 50% chance that at least two share a birthday. The Pudding has a data animation that makes the math of probability click.
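If you want to check the math yourself, here is a short sketch. It assumes 365 equally likely birthdays and ignores leap years and twins, which is the standard simplification. The trick is to compute the chance that everyone has a different birthday, then subtract that from 1.

```python
def shared_birthday_probability(people: int, days: int = 365) -> float:
    """Chance that at least two of `people` share a birthday."""
    all_different = 1.0
    for i in range(people):
        # Person i must avoid the i birthdays already taken.
        all_different *= (days - i) / days
    return 1.0 - all_different


for n in (10, 23, 50):
    print(n, round(shared_birthday_probability(n), 3))
# 10 0.117
# 23 0.507
# 50 0.97
```

At 23 people the odds tip just past even, and by 50 people a shared birthday is close to a sure thing.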

Sign of the Times